When working with third-party Docker images, I often want to see what they contain. Luckily that is very doable, as you can simply unpack the images and look at the content of the different layers. In most cases that is easier than getting a bash shell into the container and browsing around that way.

Here are a few of the commands that I normally use. As an example image I'm looking at the Dockerized version of Azure Cognitive Services: mcr.microsoft.com/azure-cognitive-services/vision/read:3.2-preview.2

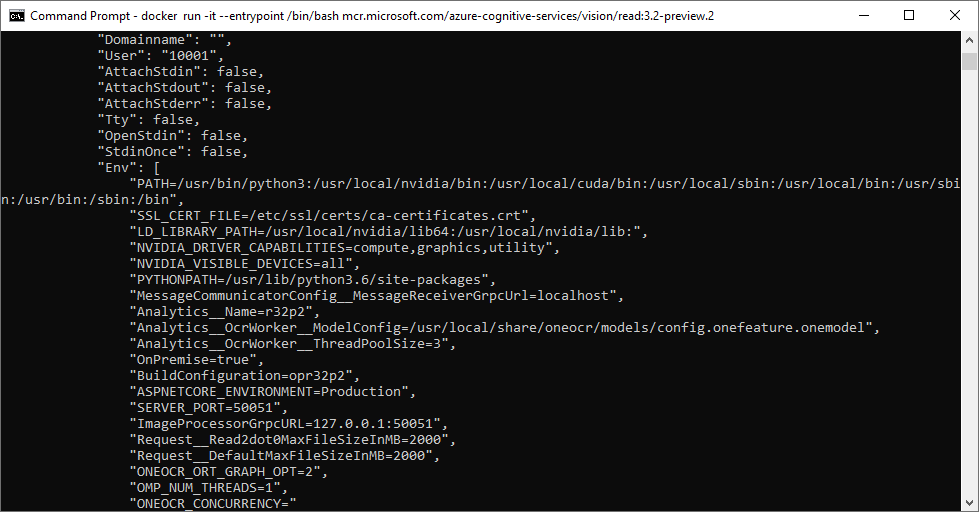

docker inspect mcr.microsoft.com/azure-cognitive-services/vision/read:3.2-preview.2

The inspect command shows how the Docker image is structured: the environment variables that are configured, the entrypoint, the number of layers, and so on.
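The full inspect output is a large JSON document, so it can help to pull out only the fields mentioned above. Here is a minimal sketch using the `--format` flag of `docker inspect`, which takes a Go template. The function name `image_summary` is my own for illustration, not something Docker provides.

```shell
# Print a short summary of an image: entrypoint, environment, and layer count.
# Usage: image_summary IMAGE
# (image_summary is a hypothetical helper name, not a Docker command.)
image_summary() {
  image="$1"
  # --format takes a Go template; the json helper prints a field as JSON.
  docker inspect --format 'Entrypoint: {{json .Config.Entrypoint}}' "$image"
  docker inspect --format 'Env: {{json .Config.Env}}' "$image"
  # RootFS.Layers holds one digest per layer, so its length is the layer count.
  docker inspect --format 'Layers: {{len .RootFS.Layers}}' "$image"
}
```

It would be called as `image_summary mcr.microsoft.com/azure-cognitive-services/vision/read:3.2-preview.2`.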

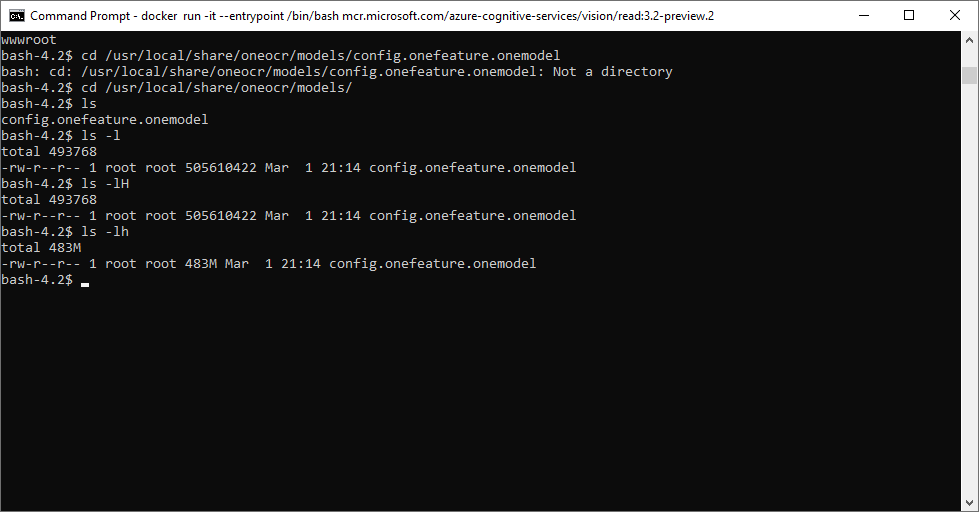

Normally the first thing I do then is to start the container with an entrypoint override, so I just get dropped into an interactive bash shell.

docker run -it --entrypoint /bin/bash mcr.microsoft.com/azure-cognitive-services/vision/read:3.2-preview.2

Now I just look around in the image to see if it looks worth exploring more.

Let's say I want to export a file from the Docker image. Then I can do so using:

docker cp 86aa4eec1c90:/usr/local/share/oneocr/models/config.onefeature.onemodel config.onefeature.onemodel

Here 86aa4eec1c90 is the container ID of the running container.
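The container does not actually have to be running for `docker cp` to work. As a rough sketch, assuming the image defines an entrypoint or command, you can create a stopped container with `docker create`, copy the file out, and remove the container again. The helper name `copy_from_image` is made up for this example.

```shell
# Copy a path out of an image without starting it.
# Usage: copy_from_image IMAGE PATH_IN_IMAGE DEST
# (copy_from_image is a hypothetical helper name, not a Docker command.)
copy_from_image() {
  # docker create prints the ID of the new (stopped) container.
  cid="$(docker create "$1")" || return 1
  docker cp "$cid:$2" "$3"
  status=$?
  # Remove the temporary container whether or not the copy succeeded.
  docker rm "$cid" >/dev/null
  return "$status"
}
```

For the file above that would be `copy_from_image mcr.microsoft.com/azure-cognitive-services/vision/read:3.2-preview.2 /usr/local/share/oneocr/models/config.onefeature.onemodel config.onefeature.onemodel`.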

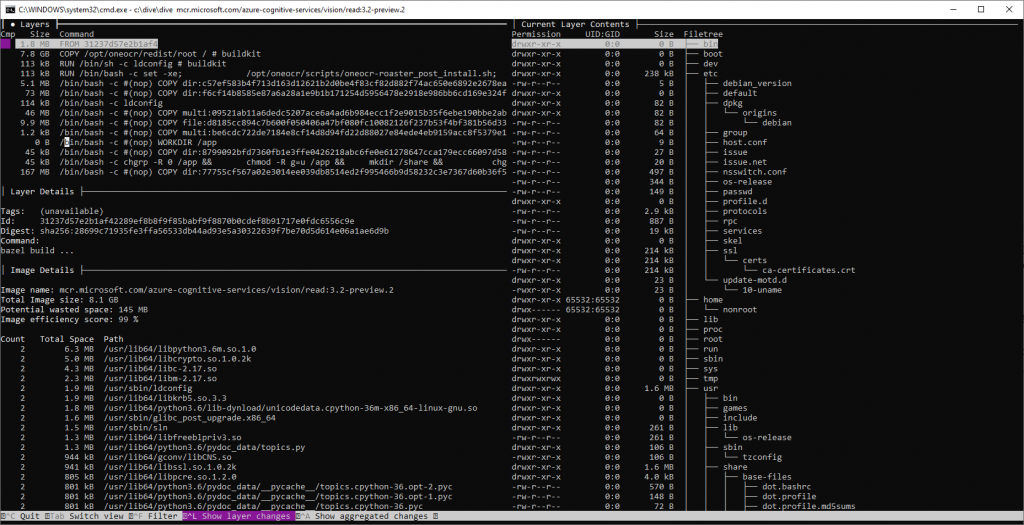

Another great way to explore the contents of a Docker image is the open source tool dive. Dive lets you browse the content layer by layer. Its purpose is to optimize image sizes by detecting large or unnecessary files, but it is also good as an investigation tool. It is a bit slow to start for large images. The Azure Cognitive Services image is 8 GB, so on my machine it takes a couple of minutes before dive is ready.

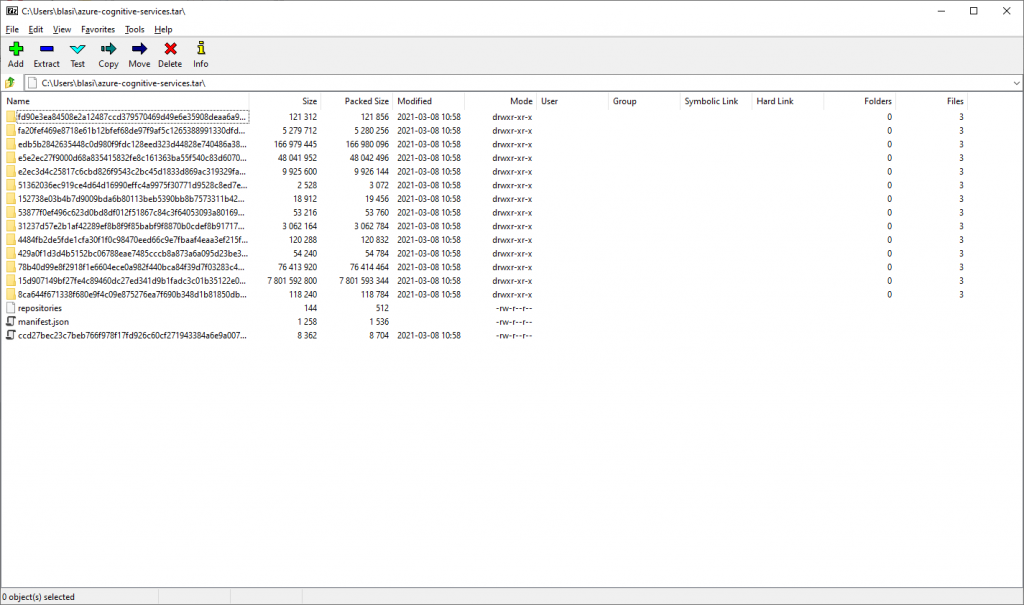

Finally, if I decide that an image contains a lot of interesting files that I want to extract, I use docker save to export all the contents of the image to a tar file.

docker save mcr.microsoft.com/azure-cognitive-services/vision/read:3.2-preview.2 > azure-cognitive-services.tar

This dumps the contents of all the layers into a tarball that can be extracted and worked with.
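Once the tarball exists, unpacking it is plain `tar`. Below is a small sketch that extracts it and prints the `manifest.json` at its root, which lists the image config and the layer archives in order. The helper name `unpack_image_tar` is my own, and the exact directory layout inside the tarball depends on the Docker version, so treat the paths in the manifest as the source of truth.

```shell
# Unpack a `docker save` tarball and print its manifest.
# Usage: unpack_image_tar TARBALL DEST_DIR
# (unpack_image_tar is a hypothetical helper name.)
unpack_image_tar() {
  mkdir -p "$2" || return 1
  tar -xf "$1" -C "$2" || return 1
  # manifest.json maps the image to its config file and ordered layer archives.
  cat "$2/manifest.json"
}
```

For example, `unpack_image_tar azure-cognitive-services.tar image-contents`. Each entry under "Layers" in the manifest is itself a tar archive, so `tar -tf` on one of them lists the files that layer added or changed.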

If you want to dump the contents of a container instead (e.g. because it has generated some data at runtime), you can use docker export in the same way as docker save. The difference is that docker export writes the container's filesystem as one flattened tar archive rather than as separate layers. Again, the time it takes depends largely on the image size and how fast your disks are (I wouldn't recommend trying this on a hard disk).
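As a sketch of the export variant: `docker export` takes a container ID or name and, with `-o`, writes the archive to a file. The helper name `export_container` is hypothetical, and listing only the first 20 entries is just an arbitrary choice for a quick look.

```shell
# Export a container's filesystem and list its first entries.
# Usage: export_container CONTAINER TARBALL
# (export_container is a hypothetical helper name, not a Docker command.)
export_container() {
  # -o writes the archive to a file instead of stdout.
  docker export -o "$2" "$1" || return 1
  # Peek at the first 20 paths in the archive (arbitrary cut-off).
  tar -tf "$2" | head -n 20
}
```

With the container from earlier, that would be `export_container 86aa4eec1c90 container-fs.tar`.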